
I'm in my final semester at the University of Texas, and I am so excited to be working on my 3D Capstone now. With my amazing team, we have developed the concept of Duality, a 3rd-person adventure puzzle game where you play as Atlas after a tragic event has struck your village. While mourning the loss of your twin, you discover the ability to go into the spirit world and find a mysterious guide that leads you on a journey to save your twin from the afterlife.

Check out some of our first gameplay below.

We still have a long way to go, but our progress thus far has been great. I look forward to continuing on this project as Level Designer and Technical Artist. Feel free to drop any feedback down below, or reach out if you have any questions. Level breakdowns are coming in the near future.


With my research coming to a close, I have distilled what I learned about VR development into this mini-game, Egg Rush. In this short experience, you will be able to test out the Lean Movement system and the updated Object Interaction system that I developed. While revisiting these systems, I ported both to Unity XR so they are available cross-platform. The Object Interaction system has also been integrated with a flocking algorithm, allowing the player to pick up multiple objects at a time based on how quickly they grip. The slower they grip, the more objects they pick up. If you would like to play this mini-game, it is available on Itch.io and SidequestVR. Otherwise, please enjoy the demo video below.
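To make the "slower grip, more objects" idea concrete, here is a minimal Python sketch. It is not the Unity code used in Egg Rush: the function name, the cap of five objects, and the one-second "slow grip" threshold are all assumptions made for illustration.

```python
def objects_to_grab(grip_seconds, max_objects=5, slow_grip_seconds=1.0):
    """Map how long the grip took to how many objects get picked up.

    grip_seconds: time from the grip starting to close until fully closed.
    max_objects and slow_grip_seconds are assumed tuning values.
    """
    # Normalise the grip time to the range 0..1 (0 = instant, 1 = slow).
    t = max(0.0, min(grip_seconds / slow_grip_seconds, 1.0))
    # A fast grip picks up one object; a slow grip picks up max_objects.
    return 1 + round(t * (max_objects - 1))
```

In a real project the grip time would come from the controller input, and the returned count would decide how many nearby objects join the grab.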

This project has been an excellent exercise in putting my research on these topics into practice. In future projects, I hope to build upon my knowledge of user interaction to better enable players to interact with their environment. While I was able to implement the flocking algorithm, the constant twitch of the eggs is extremely distracting. It may be better to use the flocking algorithm only to determine which objects to pick up, then make those objects children of the grabbed object. This would hopefully reduce the twitchy movement of objects and possibly make those objects easier to access with other systems.

This is the final topic of this research venture into virtual reality development and design. It's only fitting to end with distribution, because what is the point of making immersive experiences if you don't share them with people? When we think about how people experience VR, where are they? Are they at home, at work, at an arcade, or trying it for the first time at a demo? Where the player is and the context of their real environment can impact the kind of VR experience they'll engage with. VR is still quite a small market and has a lot of different branches with different kinds of users looking for different content.

On the other hand, at-home VR is a much more varied spectrum of player experience. As we discussed in the previous segment, VR platforms have a broad range of controller inputs and system specifications. But how do developers get to the players? Well, there are quite a few options. Below we are going to go over a few distribution platforms and their processes and requirements.

Overall, where you publish will determine your player base and the kind of content you can produce. Understanding this and improving your ability to develop platform-agnostic experiences is key to maximizing the possible audience for your VR titles. If you have any thoughts or experience with the distribution process for VR titles, please leave a comment or start a discussion down below.

After researching best practices and techniques for VR optimization and multiplatform development, I developed my own demo built for Quest 2 and the Valve Index. In this project, I decided to start learning Unity XR Toolkit to build a platform-agnostic experience. The plugin actually worked pretty well for this demo. I designed the controls around physical buttons in the scene and the simple grip button that is pretty standard across VR platforms. Doing this made building for different platforms easy, since I only had to adjust build settings to switch between PC and Android builds.

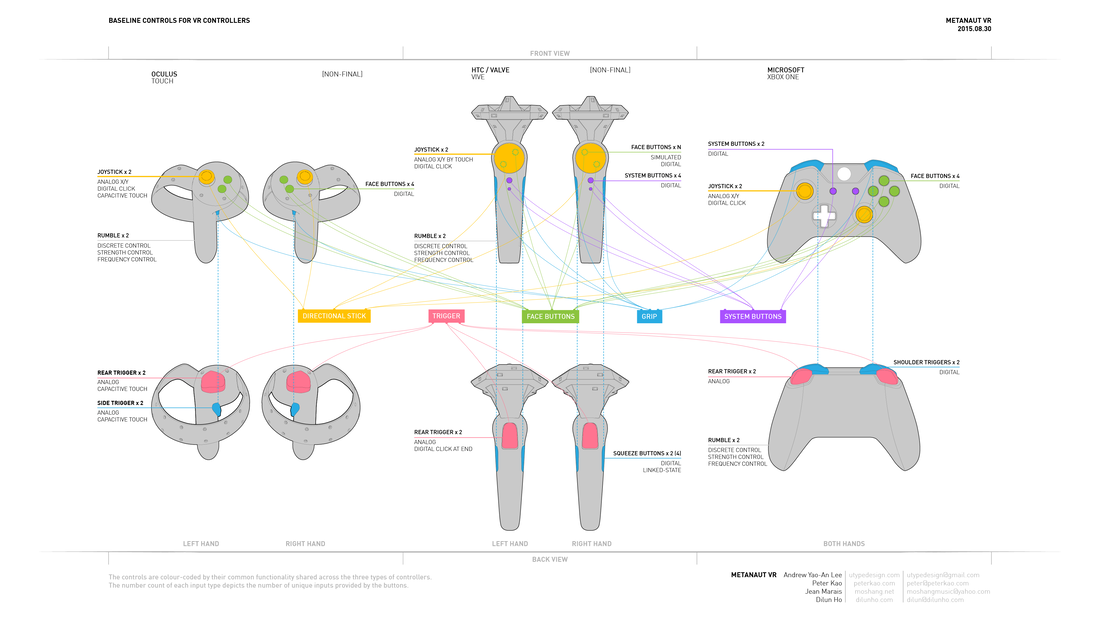

The video below showcases the difference in performance between platforms when using the same environments and optimization settings. Optimization is a tricky art and I look forward to learning more about it.

Optimization is a huge challenge in game development, and even more so when developing for VR. This blog couldn’t possibly cover every aspect in one conversation, so we will cover the main factors to keep in mind and a few best practices for optimizing VR and multi-platform development.

Optimization and multi-platform VR development are tightly knit together. With the current size of the VR industry, it is hard to rely on a single VR platform to provide a large enough audience for your game. This means that developing for as many platforms as possible should be the goal to maximize your audience. However, this comes with many challenges and headaches. From differences in controller schemes to processing power, VR is a large spectrum of player experiences.

Optimization

Much like typical game development, optimization is critical to ensure players have a consistently enjoyable experience. This means a constant frame rate and optimal graphics settings for the best possible experience. In VR, a large part of the optimization has to happen during development and design of the experience. Some of the factors that should be considered:

2. HMD capabilities can vary drastically from headset to headset.

3. Coding practices can help or hinder your performance.

Multi-Platform VR

“VR development is hard, but multi-platform VR development is really hard.” - Devin Reimer.

This quote describes this next segment perfectly. VR development is already challenging with the factors we’ve discussed in optimization. When you take that pipeline and add two or more platforms on top, the challenge multiplies. In these cases, you want to focus your optimization on the lowest-spec platform. Whether that’s mobile VR or other entry-level HMDs like the Rift S, you want to make sure that the player experience is consistent across the board. To achieve this, it’s best to design your VR game in a way that makes sense across platforms. Here are a few factors to keep in mind when designing multi-platform VR.

Sources

[1] Vlachos, Alex. “Advanced VR Rendering with Valve's Alex Vlachos.” Edited by GDC, February 29, 2016. https://www.youtube.com/watch?v=ya8vKZRBXdw.

[2] Reimer, Devin, and Andrew Eiche. “From 0 to 90: Achieving Peak Performance in Multiplatform VR Development - Unite LA.” Edited by Unity, December 3, 2018. https://www.youtube.com/watch?v=XazCUIbY1LI.

[3] Schwartz, Alex, and Devin Reimer. “Multiplatform VR - Shipping a Vive, Oculus and Playstation VR Title in Unity.” Edited by Vision VR/AR Summit, February 23, 2016. https://www.youtube.com/watch?v=ya8vKZRBXdw.

Thank you again for following my blog.

As always, any feedback, comments, or suggestions are greatly appreciated.

In my exploration of VR movement systems, I have designed my own system. The 'Leaning' system is meant to let players control their in-game movement with their own physical movement.

Process

Again, Unity and the SteamVR plugin have been chosen as the development environment for this experiment. The technical design behind this system mimics a joystick on a gamepad: the player sets their starting position, and the direction and distance from that starting position to the user’s head then act as the input. This gives the user 360 degrees of movement. The distance also drives the user’s velocity: the further the user leans from the starting position, the faster they move.
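The joystick idea can be sketched in a few lines. This is a minimal Python illustration rather than the Unity script from the project; the function name is hypothetical, and the dead zone, maximum lean distance, and top speed are assumed values chosen for the example.

```python
import math

def lean_velocity(head_xz, origin_xz, dead_zone=0.05, max_lean=0.4, max_speed=3.0):
    """Turn the head's horizontal offset from the start position into a velocity.

    head_xz, origin_xz: (x, z) positions in metres on the floor plane.
    dead_zone, max_lean, max_speed: assumed tuning values, not from the project.
    """
    dx = head_xz[0] - origin_xz[0]
    dz = head_xz[1] - origin_xz[1]
    distance = math.hypot(dx, dz)

    # Small head movements near the start position should not move the player.
    if distance <= dead_zone:
        return (0.0, 0.0)

    # Scale speed with lean distance, clamped so leaning further caps out.
    t = min((distance - dead_zone) / (max_lean - dead_zone), 1.0)
    speed = t * max_speed

    # Direction is the unit vector from the start position to the head.
    return (dx / distance * speed, dz / distance * speed)
```

Calling this once per frame with the tracked head position gives a velocity in any direction around the start point, which is where the 360 degrees of movement come from.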

Interested in being a part of the playtest? Follow this link to download.

Your feedback is always appreciated.

Throughout the years of VR development, travel has been one of the greatest challenges for developers to overcome because of how commonly motion sickness occurs. This issue is detrimental to the industry for multiple reasons.

To begin analyzing how a new system could help solve this problem, we should take stock of systems that have already been developed.

There have also been physical-space movement systems that use the user's movement in real space to travel through the virtual environment. I would like to break these systems into a few categories:

Some developers have begun to experiment with tracker systems to give users a more immersive experience. These systems are typically combined with the virtual systems we discussed earlier, such as sliding, and often involve the player walking in place, swinging their arms, or bobbing their head. They can also require additional trackers on the feet or waist to gain more accurate input about the user’s intended movement. These systems can be quite intuitive but somewhat cumbersome, because physical exertion is usually not ideal for users, especially if you want them to comfortably enjoy the experience for an extended period of time.

When designing a movement system for VR experiences, there are a few considerations you should keep in mind.

Sources: Sherman, W. R. (2019). VR developer gems. Boca Raton, FL: CRC Press.

Theory: Object selection can be improved when a system similar to A* pathfinding is implemented to continuously log the relative position of objects to the player.

Objective: A system that better tracks the position of interactable objects would allow the player more interactions with objects, such as grabbing multiple objects with one hand, with higher precision.

Possible challenges and limitations:

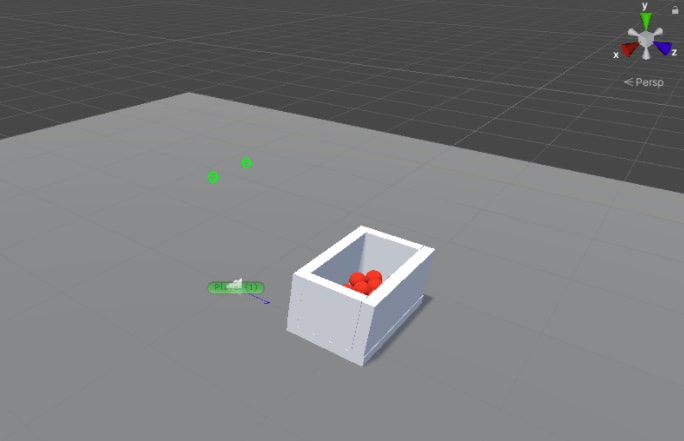

Process

I start with a basic Unity environment (v2019.3.7f1) using SteamVR (v2.6.1). This game engine and VR utility were chosen because of the ease of developing for multiple platforms simultaneously and my personal familiarity with the API. The designed objective for experimentation is to accurately pick up the most likely intended ball out of a container with multiple balls in it. This recreates an issue observed in other experiences, where it can be tricky to grab the intended object from a group on the first try.

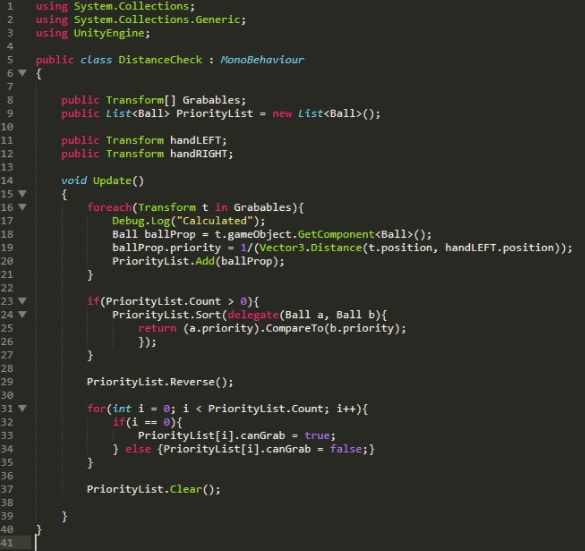

Scripts were written to keep the calculation of distance and priority separate from the control of “grabability”. This meant having the ‘DistanceCheck’ script build a list of all balls ordered by their priority (their distance to the player's hand) and enable only the closest ball to be grabbed. To test functionality, only the left hand is included in the calculations, so that the right hand can be used to verify that non-priority balls are not grabbable.
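The ranking step can be illustrated outside of Unity. The following is a minimal Python sketch under my own assumptions, not the actual ‘DistanceCheck’ script: the function names and the dictionary of ball positions are hypothetical stand-ins for the scene objects.

```python
import math

def rank_by_distance(ball_positions, hand_position):
    """Return ball names ordered from nearest to farthest from the hand.

    ball_positions: dict mapping a ball name to its (x, y, z) position.
    hand_position: (x, y, z) position of the tracked hand.
    """
    return sorted(
        ball_positions,
        key=lambda name: math.dist(ball_positions[name], hand_position),
    )

def grab_flags(ball_positions, hand_position):
    """Mark only the nearest ball as grabbable; every other ball is disabled."""
    ranked = rank_by_distance(ball_positions, hand_position)
    return {name: index == 0 for index, name in enumerate(ranked)}
```

For example, with balls at `(0, 0, 0)`, `(1, 0, 0)`, and `(0.2, 0, 0)` and the hand at `(0.25, 0, 0)`, only the third ball would be flagged as grabbable. Keeping the ranking separate from the flagging mirrors the split between priority calculation and grab control described above.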

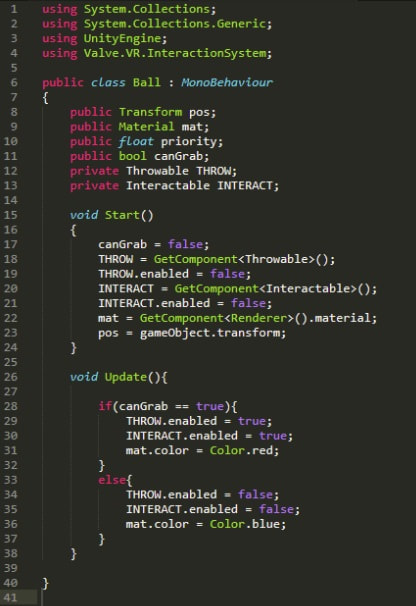

The ‘Ball’ script simply switches the individual ball’s state based on the ‘canGrab’ boolean. The script will enable or disable the Interactable and Throwable functionalities provided by the SteamVR plugin. The color of the ball’s material is also changed to provide visual feedback during testing. Red means that the ball is the calculated closest ball to the player’s hand and is grabbable, while blue means that the ball is not.
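As a rough picture of that state switch, here is a small Python sketch. It is not the SteamVR-based ‘Ball’ script itself: the two boolean fields stand in for enabling or disabling the Interactable and Throwable components, and the class layout and method name are assumptions for illustration.

```python
from dataclasses import dataclass

RED = (1.0, 0.0, 0.0)   # grabbable: closest ball to the hand
BLUE = (0.0, 0.0, 1.0)  # not grabbable

@dataclass
class Ball:
    can_grab: bool = False
    interactable_enabled: bool = False  # stand-in for the Interactable component
    throwable_enabled: bool = False     # stand-in for the Throwable component
    color: tuple = BLUE

    def apply_state(self):
        """Sync the components and debug color with the can_grab flag."""
        self.interactable_enabled = self.can_grab
        self.throwable_enabled = self.can_grab
        self.color = RED if self.can_grab else BLUE
```

Setting `can_grab` from the distance calculation and then calling `apply_state()` keeps the visual feedback and the grab behaviour in step.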

Result

While the system has been implemented, something within the SteamVR system still overrides which objects are grabbable, so balls are not disabled based on the calculations from the ‘DistanceCheck’ script. This results in a failure to improve the 3D selection system in this demo; however, it does serve well to exemplify the issues with the current SteamVR system and its inaccuracies.

Future

In future experiments, further exploration of SteamVR scripting will be necessary to counteract the overriding of the proposed system. This system could also be tested with objects building neighborhood networks to simulate grabbing a handful of objects (i.e., like grabbing a handful of candy from a bucket during Halloween).

Sources:

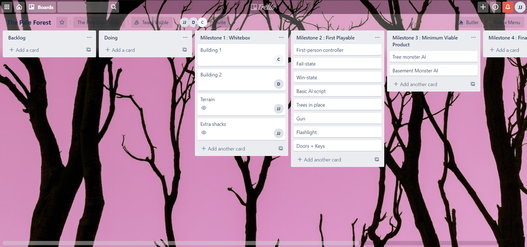

I'm starting a new project called Pale Forest in collaboration with David Cha and Clinton Thai. We came up with the idea for a mysterious forest in which the player is searching for their lost sibling. The player begins to uncover that the area was the site of a secret facility that housed dangerous creatures that now roam the area. You as the player must avoid the creatures to rescue your sibling and make it back home safe.

We're interested in going for a low-poly grayscale aesthetic to play with the idea of a horrifying atmosphere of unknown figures in the foggy distance. Grayscale also has a high contrast that can be unsettling and mystifying. Below is a moodboard exemplifying the aesthetic we want to capture.

Our team has decided to split the tasks with Clinton leading character art and David developing mechanics and audio, while I work on UI and environment art. However, one of our goals in this project is to share the design and development of the level equally. Below are some of the top-down layouts we've designed for the different areas of our project.

This is the first time our team is collaborating, so to help us stay on track and organized we've set up a Trello board. We're excited to start on this project in the coming weeks and look forward to new challenges.

This week we did a round of open playtesting to get feedback on our progress and guide adjustments in our last sprint. Most of my feedback has been related to gameplay mechanics, animation (of which there wasn't any), and lighting, which still had an annoying dynamic adjustment for the brightness of objects. So far on the project, most environmental assets have been placed and lighting has been prototyped but still needs tweaking. Importing character models with their animations is still in progress.


Author

Interested in Level Design, Procedural Generation, System Design, Geospatial Technology, and many other aspects of Game Development.

Archives

March 2021
